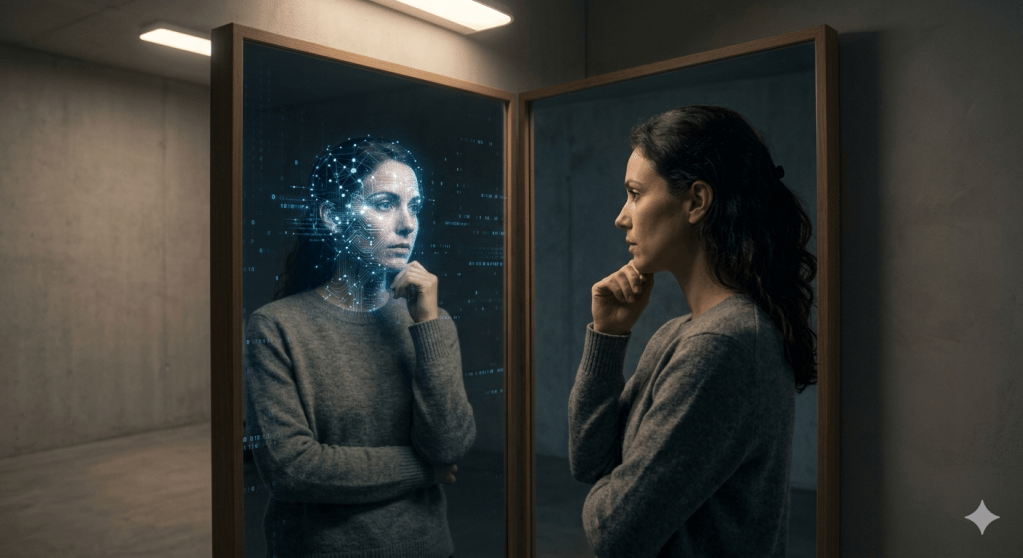

As systems begin to think and act, the real question is not what they can do—but how consciously we are building them.

We are building systems that can think and act.

But they do not know that they are doing it.

And that changes everything.

A service fails, and within seconds the system detects it, analyzes signals, decides what to do, and resolves the issue, often before anyone even notices. With the rise of agentic AI powered by models such as Google Gemini and GPT-4, and open models such as LLaMA or Mistral, this kind of automated remediation is quickly becoming the norm.

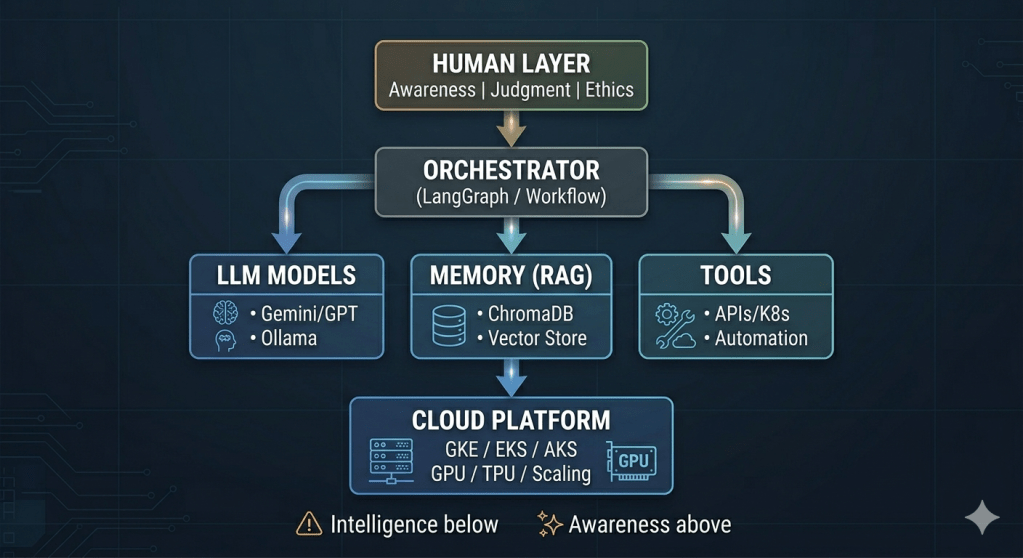

Under the hood, these systems are no longer simple pipelines. They are composed workflows—often orchestrated using frameworks like LangGraph, supported by memory layers such as vector databases, and executed across cloud platforms like Google Kubernetes Engine (GKE), Amazon Elastic Kubernetes Service (EKS), or Azure Kubernetes Service (AKS).

Agentic AI is not the rise of machine consciousness. It is the rise of intelligence without awareness.

And yet, despite all this sophistication, a deeper question remains:

Are we building these systems consciously, or are we simply building them fast?

Beyond Technology: What “Spirituality” Means Here

To understand why this matters, we need to step outside technology for a moment.

One way to do that is to borrow a lens we already understand well—human awareness.

What we often call “spirituality” is, at its core, the study of awareness, intention, and responsibility. These are not abstract ideas—they are the same qualities we rely on when making decisions in complex systems.

Using this lens helps us ask a better question: not just what can a system do, but how should it behave.

In many ways, building AI systems today is less about writing code—and more about understanding behavior.

Over time, I’ve found that the hardest problems in systems are rarely technical—they’re about understanding behavior, making decisions under uncertainty, and knowing where to draw boundaries.

When I use the word spirituality, I am not referring to religion. I mean something far more practical—qualities we rely on every day, often without naming them:

- Awareness of what is happening

- Clarity about why it matters

- Responsibility for what we do next

Across philosophies, one idea keeps returning:

We are not defined by our thoughts—but by our awareness of them.

Over the last two decades in infrastructure and platform engineering, one pattern has repeated itself:

Most failures are not caused by lack of technology. They are caused by lack of clarity.

As AI systems become more autonomous, that lack of clarity doesn’t stay contained. It scales—with speed, with reach, and with impact.

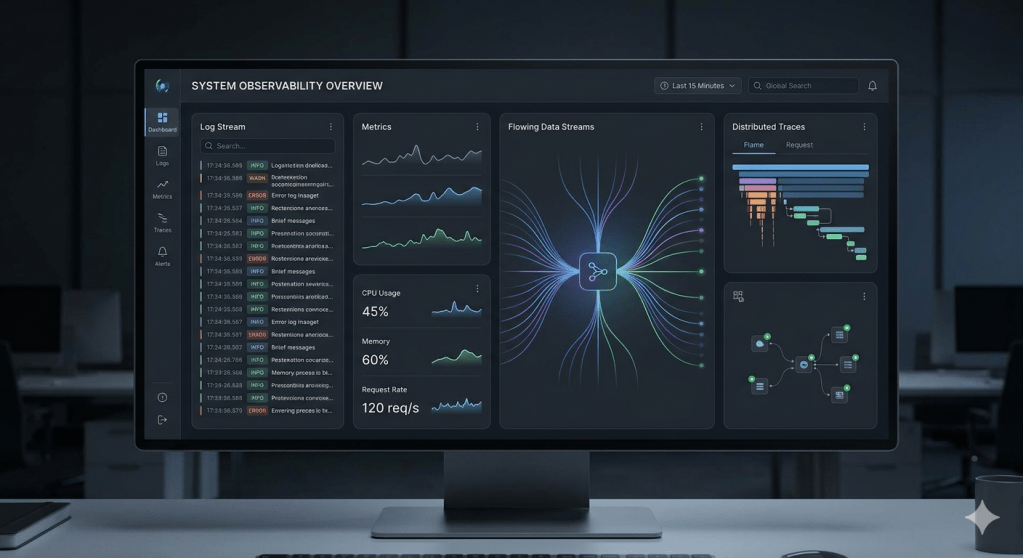

Awareness Comes Before Control

One of the simplest ideas—across philosophy, psychology, and engineering—is this:

You cannot improve what you cannot observe.

In systems, we call this observability.

In human terms, we call it awareness.

That is why we invest in logs, metrics, and traces. In the world of AI, this extends further. Teams increasingly rely on tracing tools like Langfuse and prompt evaluation frameworks such as Promptfoo to understand:

- How prompts are constructed

- Which tools are invoked

- How decisions are made

- What responses are generated
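To make the list above concrete, here is a minimal sketch of the kind of record such tracing produces: one event per step of an agent run. The `TraceEvent` schema, the `Tracer` class, and the tool name `get_pod_logs` are hypothetical illustrations, not the API of Langfuse or Promptfoo; real tools add spans, latency, token counts, and a backend to export to.

```python
import json
import time
from dataclasses import asdict, dataclass, field
from typing import Any


@dataclass
class TraceEvent:
    # Hypothetical schema for illustration; not the Langfuse data model.
    run_id: str
    step: str  # "prompt", "tool_call", "decision", or "response"
    payload: dict[str, Any]
    timestamp: float = field(default_factory=time.time)


class Tracer:
    """Collects events for one agent run in memory; a real tracer would export them."""

    def __init__(self, run_id: str) -> None:
        self.run_id = run_id
        self.events: list[TraceEvent] = []

    def record(self, step: str, **payload: Any) -> None:
        self.events.append(TraceEvent(self.run_id, step, payload))

    def to_json(self) -> str:
        return json.dumps([asdict(e) for e in self.events], indent=2)


tracer = Tracer(run_id="run-001")
tracer.record("prompt", template="triage_v1", variables={"service": "checkout"})
tracer.record("tool_call", tool="get_pod_logs", args={"pod": "checkout-7f9c"})
tracer.record("decision", action="restart_pod", reason="OOMKilled in recent logs")
tracer.record("response", text="Restarted checkout-7f9c after an OOMKilled event.")
print(tracer.to_json())
```

The field that matters most is the `reason` on the decision event: it is what lets someone reconstruct why the agent acted, not just that it did.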

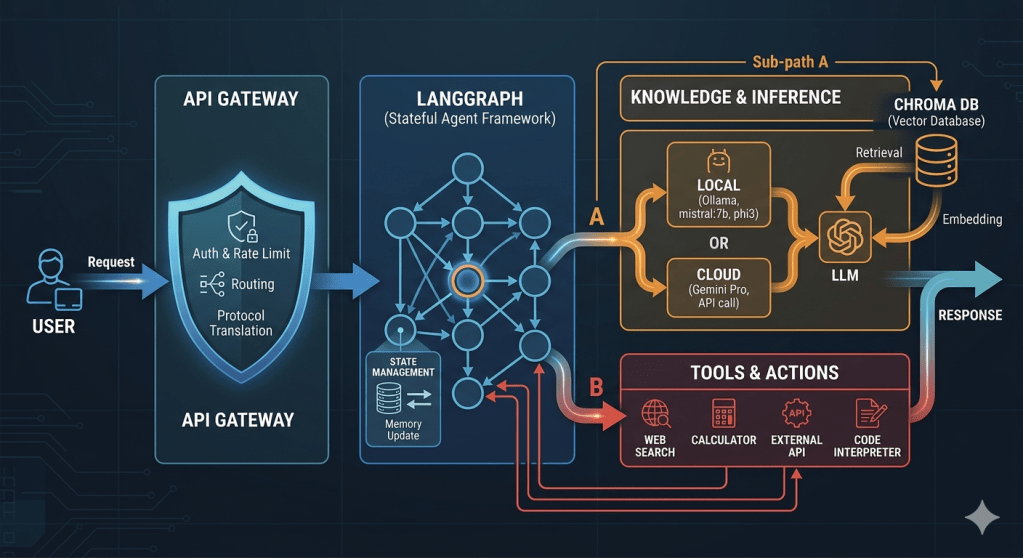

A typical agentic flow might look like this:

User → API Gateway → LangGraph → (ChromaDB + LLM via Ollama or Gemini) → Tools → Response

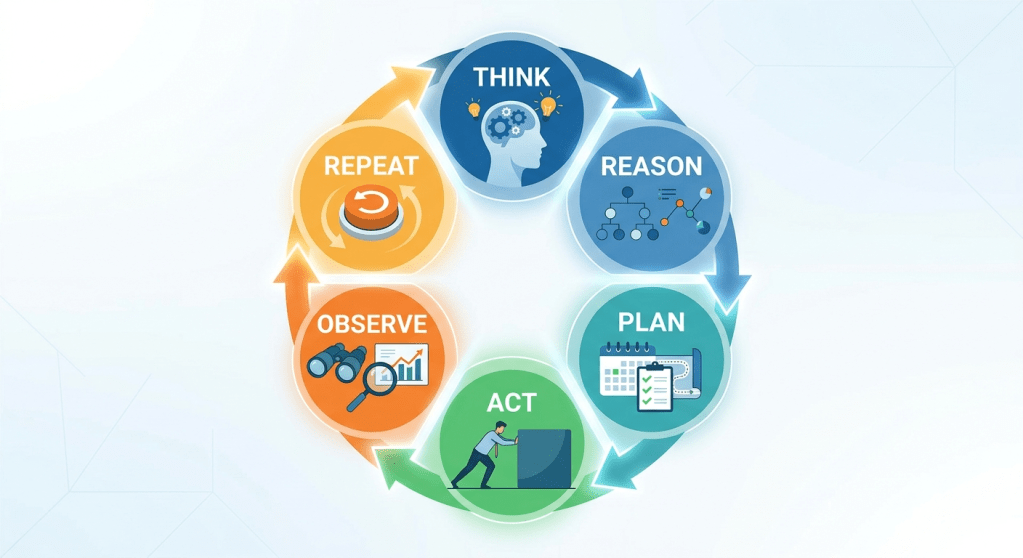

Internally, the system loops through:

Think → Reason → Plan → Act → Observe → Repeat
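That loop can be sketched in a few lines. This is a simplified illustration under stated assumptions: `plan` stands in for an LLM call (a real system would orchestrate this with a framework such as LangGraph), the tool is a stub, and `max_iterations` is an assumed safety cap. Each step keeps the agent's stated `thought` next to what it did and what it observed.

```python
from collections.abc import Callable
from dataclasses import dataclass


@dataclass
class Step:
    thought: str  # why the agent chose this action, kept for later review
    action: str  # tool name, or "finish" to stop
    observation: str = ""


def run_agent(
    plan: Callable[[list[Step]], Step],
    tools: dict[str, Callable[[], str]],
    max_iterations: int = 5,
) -> list[Step]:
    """Think/plan, act, observe, and repeat until "finish" or the iteration cap."""
    history: list[Step] = []
    for _ in range(max_iterations):
        step = plan(history)  # think, reason, plan
        if step.action != "finish":
            tool = tools.get(step.action)
            # act, then observe the result
            step.observation = tool() if tool else f"unknown tool: {step.action}"
        history.append(step)
        if step.action == "finish":
            break
    return history


def stub_plan(history: list[Step]) -> Step:
    # Stand-in for an LLM call: check health once, then stop.
    if not history:
        return Step("Service reports errors; check health first.", "check_health")
    return Step(f"Health check returned: {history[-1].observation}", "finish")


steps = run_agent(stub_plan, {"check_health": lambda: "503 from upstream"})
for s in steps:
    print(f"{s.action:<12} | {s.thought} | {s.observation}")
```

The cap keeps a confused planner from looping forever; the recorded thoughts are what let a human see why it kept going.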

It looks intelligent. And often, it is. But without visibility into why something happened, we are left with a system that acts—but cannot be fully understood.

That is not intelligence. That is opacity.

A Simple View: The Conscious AI System

To make this more concrete, here’s a simplified view of how modern agentic systems are structured—and where awareness actually sits.

The lower part of this system—models, tools, orchestration—is where intelligence operates.

The upper layer—human awareness, judgment, and responsibility—remains outside the system.

And that distinction is what matters.

When Intelligence Acts Without Understanding

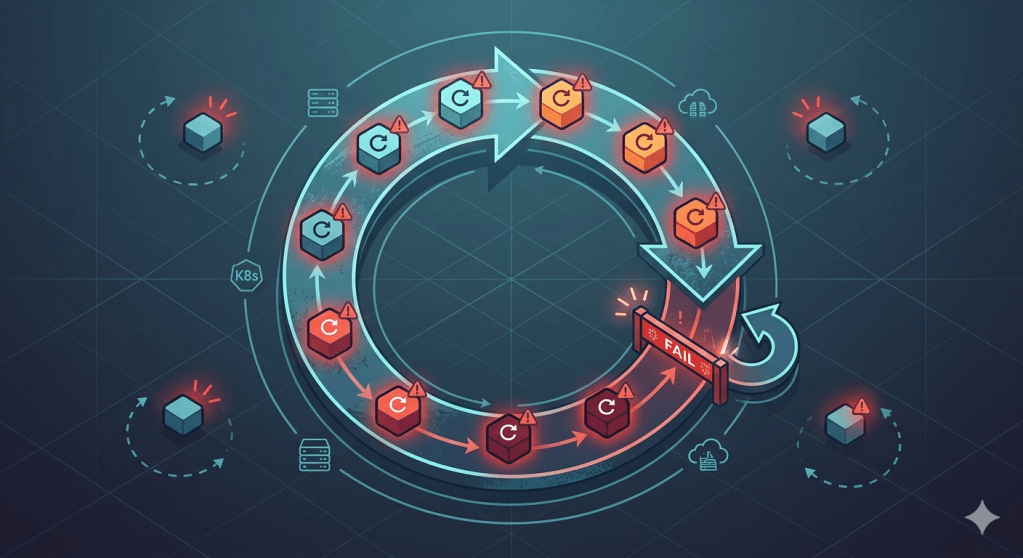

Consider a real-world scenario:

An AI-powered operations agent is managing workloads on Kubernetes, running on platforms like Google Kubernetes Engine (GKE) or Amazon Elastic Kubernetes Service (EKS).

A pod fails. The system detects it, inspects logs, and restarts it. At first, everything works as expected.

But if the failure is deeper—say a dependency issue or upstream outage—the system continues restarting the pod.

Soon:

- Logs begin to flood the system

- Resources are consumed unnecessarily

- Costs increase (especially in GPU-backed workloads)

- The real issue gets buried

Nothing in the agent is technically broken. It is doing exactly what it was designed to do. And yet:

It does not ask, “Why is this happening?”

It does not decide, “This needs escalation.”

That is the difference between execution and understanding.
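One way to encode the missing question is to bound automatic remediation and escalate when the same failure keeps returning. Kubernetes already applies an exponential back-off to crashing containers (the familiar `CrashLoopBackOff` state), but backing off is not the same as deciding that a human needs to look. The sketch below assumes a hypothetical `RestartGuard` inside the agent itself; the limit of three restarts in ten minutes is an arbitrary example, not a recommended default.

```python
import time
from collections import deque
from collections.abc import Callable


class RestartGuard:
    """Permits a bounded number of restarts per pod in a sliding window, then escalates.

    Hypothetical helper; the thresholds are illustrative, not Kubernetes defaults.
    """

    def __init__(
        self,
        max_restarts: int = 3,
        window_seconds: float = 600.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.max_restarts = max_restarts
        self.window_seconds = window_seconds
        self.clock = clock
        self.history: dict[str, deque[float]] = {}

    def decide(self, pod: str) -> str:
        now = self.clock()
        restarts = self.history.setdefault(pod, deque())
        # Drop restarts that fall outside the sliding window.
        while restarts and now - restarts[0] > self.window_seconds:
            restarts.popleft()
        if len(restarts) >= self.max_restarts:
            # The same failure keeps coming back: stop retrying, hand it to a human.
            return "escalate"
        restarts.append(now)
        return "restart"


# Simulate one failure per minute with a fake clock.
fake_now = [0.0]
guard = RestartGuard(max_restarts=3, window_seconds=600, clock=lambda: fake_now[0])
decisions = []
for minute in range(5):
    fake_now[0] = minute * 60.0
    decisions.append(guard.decide("checkout-7f9c"))
print(decisions)  # ['restart', 'restart', 'restart', 'escalate', 'escalate']
```

The guard does not diagnose the dependency issue or the upstream outage; it only stops the agent from burying it under restarts and log noise.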

At this point, the system appears intelligent.

But this is where a deeper limitation begins to show.

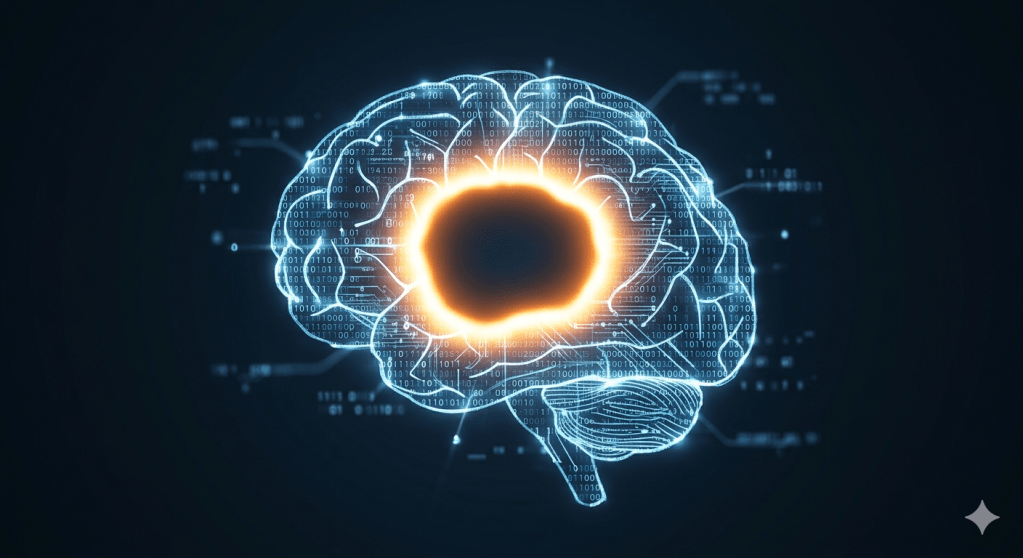

The Illusion of Intelligence

Large language models such as Google Gemini and GPT-4, along with open models such as LLaMA or Mistral, can produce remarkably coherent outputs. But they are not “thinking” in the human sense. They are predicting patterns. That’s why we see:

- Hallucinations

- Confident but incorrect answers

- Sensitivity to phrasing

Even local runtimes like Ollama don’t change this fundamental nature—they only change where the model runs.

As Daniel Kahneman observed:

“A reliable way to make people believe in falsehoods is frequent repetition.”

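AI systems can fall into a similar pattern: a claim that appears often enough in their training data can be reproduced fluently and confidently, whether or not it is true.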

They generate answers. But they do not understand consequences.

Grounding AI: Bringing Context into the System

One of the biggest challenges with large language models is that they generate responses based on patterns—not on real-time truth. This is where grounding becomes essential.

In practical terms, grounding means connecting the model to reliable, contextual data instead of relying only on what it has learned during training.

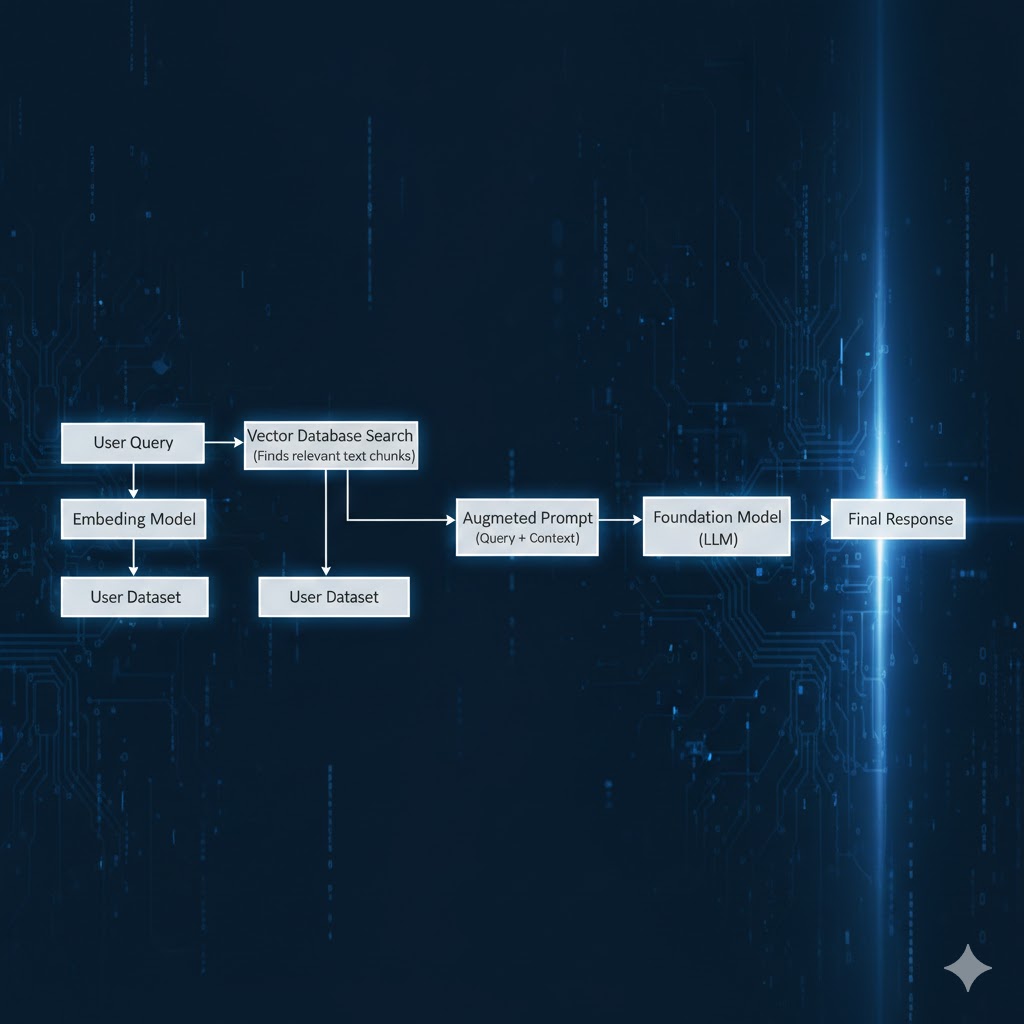

A common approach to this is Retrieval-Augmented Generation (RAG).

In a typical setup:

- The system retrieves relevant data from a source (logs, documents, databases, or knowledge systems)

- This context is passed to the model

- The model generates a response based on both its training and the retrieved information

In many modern architectures, this is implemented using:

- Context injection into prompts

- Vector databases (such as Chroma, Pinecone, Milvus, Weaviate, or similar stores)

- Retrieval pipelines
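The retrieve-then-inject pattern can be shown without any external services. In this sketch, word-count vectors and cosine similarity stand in for learned embeddings and a vector database such as Chroma, and the final model call is left out: the output is the grounded prompt that would be sent to the LLM. The documents and function names are invented for illustration.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query (word-count vectors)."""
    q = Counter(tokenize(query))
    ranked = sorted(documents, key=lambda d: cosine(q, Counter(tokenize(d))), reverse=True)
    return ranked[:k]


def build_prompt(query: str, context: list[str]) -> str:
    """Context injection: place the retrieved text ahead of the question."""
    joined = "\n".join(f"- {c}" for c in context)
    return (
        "Answer using only the context below. If the context is insufficient, say so.\n"
        f"Context:\n{joined}\n\nQuestion: {query}"
    )


docs = [
    "Runbook: checkout pods restart when the payments API returns 503.",
    "Release notes: the search service moved to a new index format.",
    "Incident note: payments API outage caused checkout 503 errors.",
]
question = "Why is checkout returning 503 errors?"
print(build_prompt(question, retrieve(question, docs)))
```

Replacing `retrieve` with a real embedding model and vector store changes the quality of the matches, not the shape of the pipeline.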

Why This Matters

Without grounding:

- Models hallucinate

- Responses may sound correct but be factually wrong

With grounding:

- Responses become context-aware

- Outputs are more reliable

- Systems behave closer to real-world expectations

Grounding does not make AI aware. But it does bring it closer to reality.

Humans don’t just retrieve information; we interpret it.

AI retrieves information, but it still does not know what it means.

RAG doesn’t give AI understanding—it gives it better context.

And context is often the difference between confidence and correctness.

Responsibility Is Where Design Becomes Human

As systems gain the ability to act, the real challenge is no longer capability—it is responsibility.

In practice, this means designing systems with:

- Guardrails and policy enforcement

- Approval workflows for sensitive actions

- Human-in-the-loop checkpoints
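A guardrail layer can be as simple as a default-deny check that sits between the agent's decision and its execution. The action names, namespaces, and approval flow below are hypothetical; production systems often delegate rules like these to a dedicated policy engine rather than hard-coding them.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Verdict(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    DENY = "deny"


@dataclass(frozen=True)
class Action:
    name: str
    namespace: str


# Hypothetical policy tables; anything not listed is denied by default.
ALLOWED_ACTIONS = {"get_logs", "restart_pod", "scale_deployment"}
NEEDS_HUMAN = {"scale_deployment"}
PROTECTED_NAMESPACES = {"kube-system"}


def evaluate(action: Action) -> Verdict:
    if action.name not in ALLOWED_ACTIONS or action.namespace in PROTECTED_NAMESPACES:
        return Verdict.DENY
    if action.name in NEEDS_HUMAN:
        return Verdict.NEEDS_APPROVAL
    return Verdict.ALLOW


def execute(action: Action, approved_by: Optional[str] = None) -> str:
    verdict = evaluate(action)
    if verdict is Verdict.DENY:
        return f"denied: {action.name} in {action.namespace}"
    if verdict is Verdict.NEEDS_APPROVAL and approved_by is None:
        return f"pending approval: {action.name} in {action.namespace}"
    # The real side effect (for example, a Kubernetes API call) would happen here.
    note = f" (approved by {approved_by})" if approved_by else ""
    return f"executed: {action.name} in {action.namespace}{note}"


print(execute(Action("restart_pod", "shop")))
print(execute(Action("scale_deployment", "shop")))
print(execute(Action("scale_deployment", "shop"), approved_by="on-call"))
print(execute(Action("delete_namespace", "shop")))
```

Default-deny matters more than the specific rules: an action nobody anticipated is refused rather than executed at scale.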

Because once deployed on scalable platforms—backed by GPUs, TPUs, and auto-scaling clusters—these systems don’t just act.

They act at scale. And scale amplifies everything:

- Good decisions

- Bad assumptions

- Unintended consequences

And once amplified, even small mistakes stop being small.

Intelligence can now be scaled up on demand. Awareness does not scale with it.

The Architect’s Real Challenge

One of the hardest parts of building AI platforms is not technical. It is letting go of:

- Fixed architectures

- Tool preferences

- The idea of a “perfect system”

AI ecosystems evolve constantly. Frameworks like LangGraph will evolve. Observability tools like Langfuse will evolve. Models will evolve even faster.

The architect’s role is no longer to design something static.

It is to design something that can evolve—without losing control.
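One pattern for evolving without losing control is to keep the controls at a stable boundary and let the model behind it change. In this sketch, `ChatModel` is a hypothetical minimal interface, `EchoModel` and `UppercaseModel` are stand-ins for real adapters (for example, around Gemini or a model served by Ollama), and `GovernedModel` applies the same prompt limit and audit log regardless of which backend is plugged in.

```python
from typing import Protocol


class ChatModel(Protocol):
    """Hypothetical minimal interface the rest of the platform depends on."""

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in backend; a real adapter would call a hosted or local model."""

    def complete(self, prompt: str) -> str:
        return f"echo: {prompt}"


class UppercaseModel:
    """A second stand-in, to show that backends can be swapped."""

    def complete(self, prompt: str) -> str:
        return prompt.upper()


class GovernedModel:
    """Applies the same checks and audit log to whatever backend it wraps."""

    def __init__(self, inner: ChatModel, max_prompt_chars: int = 4000) -> None:
        self.inner = inner
        self.max_prompt_chars = max_prompt_chars
        self.audit_log: list[tuple[str, str]] = []

    def complete(self, prompt: str) -> str:
        if len(prompt) > self.max_prompt_chars:
            raise ValueError(f"prompt longer than {self.max_prompt_chars} characters")
        response = self.inner.complete(prompt)
        self.audit_log.append((prompt, response))  # kept regardless of backend
        return response


for backend in (EchoModel(), UppercaseModel()):
    governed = GovernedModel(backend)
    print(governed.complete("status of checkout?"))
```

The wrapper is the part that should change slowly; the backends are expected to change often.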

This is also where experience starts to matter more than tools.

Designing with Awareness

If there is one idea that ties all of this together, it is this:

Build systems that remain visible, guided, and accountable.

In practice, that means:

- Making system behavior observable

- Defining clear intent (prompts, policies)

- Controlling how actions are executed

- Keeping humans responsible for critical decisions

- Balancing performance with cost and sustainability

A Final Reflection

We often measure AI progress through model size, performance benchmarks, or response speed.

But a more meaningful question is:

Do we truly understand what we are building—and how it behaves?

Because in the end, these systems reflect:

- Our design choices

- Our assumptions

- Our level of awareness

As a line often attributed to Albert Einstein puts it:

“We cannot solve our problems with the same thinking we used when we created them.”

Closing Thought

The future of AI will not be defined only by intelligence.

It will be defined by the quality of awareness behind it.

Because no matter how autonomous our systems become—

they will act.

But only we will understand.

In the next article, I explore a natural follow-up question:

👉 If AI isn’t aware, how do we trust it?

Read Part 2 here: [Link to Part 2]
